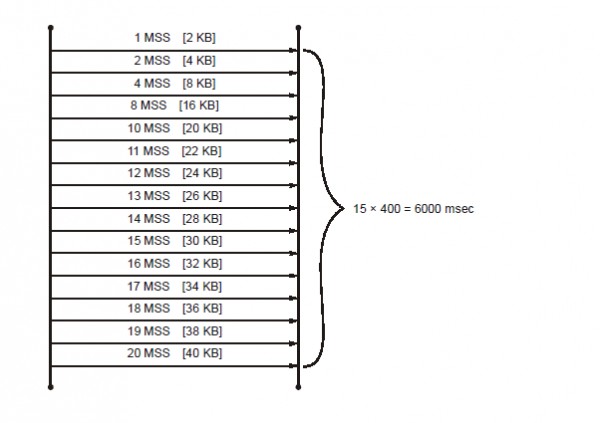

Assume a scenario where the congestion window of a TCP connection is 40 KB when a timeout occurs. The maximum segment size (MSS) is 2 KB and the propagation delay is 200 msec. The time taken by the TCP connection to get back to a 40 KB congestion window is _________ msec.

I think the answer should be 5600, but 6000 is given. They have also counted the last RTT. Should it be added?
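
Both figures can be reproduced with a short sketch. It rests on assumptions the question does not state explicitly: RTT = 2 × propagation delay (transmission time ignored), the threshold is set to half the window at timeout (20 KB = 10 MSS), slow start restarts from 1 MSS and doubles each RTT but is capped at the threshold, and after that the window grows by 1 MSS per RTT. The function name `rtts_to_recover` is just an illustrative helper.

```python
def rtts_to_recover(cwnd_kb, mss_kb):
    """Count the RTTs needed for cwnd to grow from 1 MSS back to its old size.

    Assumes ssthresh = half the window at timeout, slow start capped at
    ssthresh, then additive increase of 1 MSS per RTT.
    """
    target = cwnd_kb // mss_kb   # window at timeout, in MSS (20)
    ssthresh = target // 2       # assumed threshold after timeout (10)
    cwnd = 1                     # slow start restarts at 1 MSS
    rounds = 0
    while cwnd < target:
        if cwnd < ssthresh:
            cwnd = min(cwnd * 2, ssthresh)  # slow start: 1, 2, 4, 8, 10
        else:
            cwnd += 1                       # congestion avoidance: 11 ... 20
        rounds += 1
    return rounds


RTT_MS = 2 * 200  # assumed: RTT is twice the propagation delay

rounds = rtts_to_recover(40, 2)
print(rounds)                 # 14 RTTs until the window reaches 20 MSS
print(rounds * RTT_MS)        # 5600: time until cwnd is 40 KB again
print((rounds + 1) * RTT_MS)  # 6000: also counts the RTT in which the 40 KB window is sent
```

Under these assumptions the window sequence is 1, 2, 4, 8, 10, 11, ..., 20 MSS. The difference between 5600 and 6000 comes down to whether the round that transmits the full 40 KB window is counted.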