Need verification from Arjun Sir,

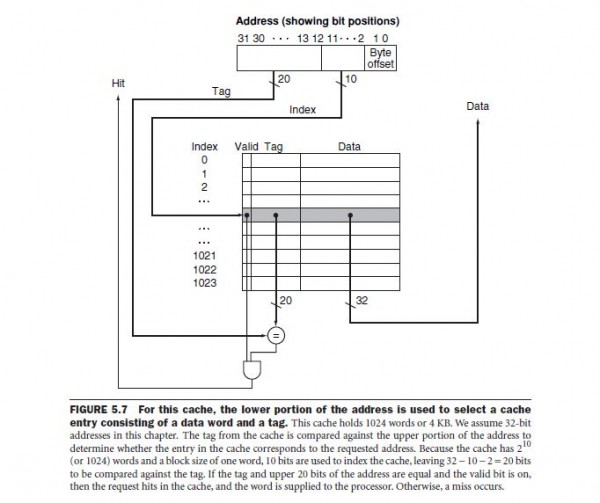

For (A) Direct Mapped Cache:

So, the hit latency is the line decoding time (assume 0 if not given) + tag comparison time + AND gate delay.

The AND gate delay is not given, so assume it to be zero. Hit time $=\frac{17}{10}=1.7\,ns$.

Since the other delays that are present were assumed to be zero (either not given or stated to be zero), the correct way to say this is that the cache hit time must be at least $1.7\,ns$.
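The direct-mapped calculation above can be sketched in a few lines of Python. This is only an illustration: the function names are made up here, and the 17-bit tag and the $k/10$ ns comparator rule are read off the $\frac{17}{10}$ expression above. The decode and AND gate delays default to zero, matching the assumption made in the answer.

```python
def comparator_delay_ns(tag_bits):
    """Delay of a k-bit tag comparator, assuming the k/10 ns rule used above."""
    return tag_bits / 10


def direct_mapped_hit_time_ns(tag_bits, decode_ns=0.0, and_gate_ns=0.0):
    """Lower bound on hit time: decode + tag compare + AND gate.

    decode_ns and and_gate_ns default to 0 because they are not given.
    """
    return decode_ns + comparator_delay_ns(tag_bits) + and_gate_ns


# 17-bit tag, other delays assumed zero
direct_hit = direct_mapped_hit_time_ns(17)
print(f"Direct mapped hit time >= {direct_hit:.1f} ns")
```

If a decode or AND gate delay were given, passing it in would only raise the result, which is why 1.7 ns is a lower bound.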

(B) Set Associative cache design:

Here, once the tag comparison is done (assuming the AND gate delay to be zero), the multiplexer uses the tag comparison result lines as its select input to decide which block of the set must be selected. So, after the tag comparison is done, the MUX and the OR gate can produce their results in parallel.

Also, note that the set decoding time, if given, is to be taken into account while calculating the hit time.

So, here cache hit time $=1.8\,(\text{tag})+0.6\,(\text{OR and MUX delay in parallel})=2.4\,ns$ (remember, the cache hit time is at least this).
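As a rough sketch of the set-associative case: because the MUX and the OR gate work in parallel after the tag comparison, only the slower of the two adds to the hit time. The function name and the `or_gate_ns` parameter are assumptions for illustration. The OR gate delay is not given, so it is taken as zero here, which leaves the 0.6 ns MUX delay as the parallel stage.

```python
def set_associative_hit_time_ns(tag_compare_ns, mux_ns, or_gate_ns=0.0,
                                decode_ns=0.0):
    """Lower bound on hit time for the set-associative design.

    The MUX and the OR gate run in parallel after the tag comparison,
    so the parallel stage costs max(mux_ns, or_gate_ns).
    or_gate_ns and decode_ns are assumed 0 because they are not given.
    """
    parallel_stage_ns = max(mux_ns, or_gate_ns)
    return decode_ns + tag_compare_ns + parallel_stage_ns


# 1.8 ns tag comparison followed by a 0.6 ns 2x1 MUX
set_assoc_hit = set_associative_hit_time_ns(tag_compare_ns=1.8, mux_ns=0.6)
print(f"Set associative hit time >= {set_assoc_hit:.1f} ns")
```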

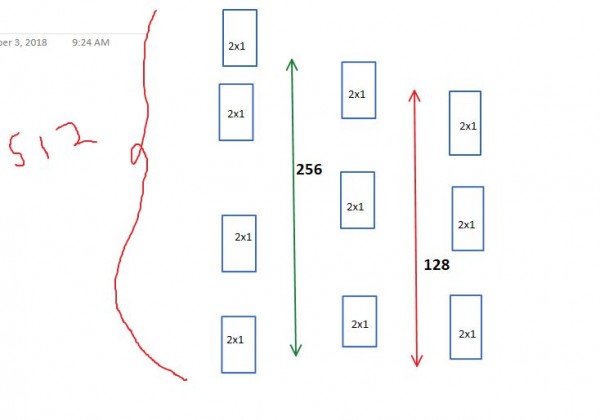

(C) Associative Cache: Here my cache has $2^{10}$ lines and each tag has 27 bits. For all $2^{10}$ lines, the tag comparison is done in parallel, and this takes $2.7\,ns$. After this, we have 1024 tag comparator output lines. Since we now need to select 1 block out of 1024, we need the functionality of a 1024x1 MUX, but we have only 2x1 MUXes.

So, my 1024x1 MUX can be implemented as below:

Image URL:https://gateoverflow.in/?qa=blob&qa_blobid=15779298586899863341

So, we need 512 2x1 MUXes at the first level to select out of 1024 lines,

256 at the second level, 128 at the third, 64 at the fourth, 32 at the fifth, 16 at the sixth, 8 at the seventh, 4 at the eighth, 2 at the ninth, and finally a single 2x1 MUX at the 10th level.
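The level-by-level counts above can be checked with a small sketch. The helper name `mux_tree_levels` is hypothetical, and it assumes the number of inputs is a power of two, as it is here (1024).

```python
def mux_tree_levels(inputs):
    """Number of 2x1 MUXes at each level of a tree selecting 1 of `inputs`.

    Assumes `inputs` is a power of two, so every level halves cleanly.
    """
    counts = []
    remaining = inputs
    while remaining > 1:
        muxes = remaining // 2   # each 2x1 MUX merges two lines into one
        counts.append(muxes)
        remaining = muxes
    return counts


levels = mux_tree_levels(1024)
print(levels)       # MUX count per level, first level first
print(len(levels))  # number of levels a signal passes through
```

Only the number of levels matters for the delay, since all MUXes within one level operate in parallel.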

So, a total of 10 levels of multiplexing are needed, and this will take $10 \times 0.6 = 6\,ns$.

So, cache hit time here $=2.7+6=8.7\,ns$.
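Putting the fully associative case together, a minimal sketch under the same assumptions (27-bit tag with the $k/10$ ns comparator rule, 0.6 ns per 2x1 MUX, all other delays zero) might look like this. The function name and its parameters are illustrative only.

```python
def fully_associative_hit_time_ns(lines, tag_bits, mux_ns=0.6):
    """Lower bound on hit time: parallel tag compare + 2x1 MUX tree.

    Assumes a k-bit comparator takes k/10 ns and that `lines` >= 2.
    The tree depth is ceil(log2(lines)), computed with integer arithmetic.
    """
    tag_compare_ns = tag_bits / 10
    mux_levels = (lines - 1).bit_length()   # 1024 lines -> 10 levels
    return tag_compare_ns + mux_levels * mux_ns


full_assoc_hit = fully_associative_hit_time_ns(lines=2**10, tag_bits=27)
print(f"Fully associative hit time >= {full_assoc_hit:.1f} ns")
```

The MUX tree dominates here: the delay grows with the logarithm of the number of lines, while the tag comparison stays fixed because all comparators run in parallel.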